While we try to keep things accurate, this content is part of an ongoing experiment and may not always be reliable.

Please double-check important details — we’re not responsible for how the information is used.

Computer Modeling

A New Neural Network Paradigm: Unlocking Memory Retrieval with Input-Driven Plasticity

Listen to the first notes of an old, beloved song. Can you name that tune? If you can, congratulations — it’s a triumph of your associative memory, in which one piece of information (the first few notes) triggers the memory of the entire pattern (the song), without you actually having to hear the rest of the song again. We use this handy neural mechanism to learn, remember, solve problems and generally navigate our reality.

Computer Modeling

Unveiling the Hidden Power of Quantum Computers: Scientists Discover Forgotten Particle that Could Unlock Universal Computation

Scientists may have uncovered the missing piece of quantum computing by reviving a particle once dismissed as useless. This particle, called the neglecton, could give fragile quantum systems the full power they need by working alongside Ising anyons. What was once considered mathematical waste may now hold the key to building universal quantum computers, turning discarded theory into a pathway toward the future of technology.

Computer Graphics

Cracking the Code: Scientists Achieve a Breakthrough in Quantum Computing with a Single Atom

A research team has created a quantum logic gate that uses fewer qubits by encoding them with the GKP (Gottesman-Kitaev-Preskill) error-correcting code. By entangling quantum vibrations inside a single atom, they achieved a milestone that could transform how quantum computers scale.

Civil Engineering

A Groundbreaking Magnetic Trick for Quantum Computing: Stabilizing Qubits with Exotic Materials

Researchers have unveiled a new quantum material that could make quantum computers much more stable by using magnetism to protect delicate qubits from environmental disturbances. Unlike traditional approaches that rely on rare spin-orbit interactions, this method uses magnetic interactions—common in many materials—to create robust topological excitations. Combined with a new computational tool for finding such materials, this breakthrough could pave the way for practical, disturbance-resistant quantum computers.

- A New Horizon for Vision: How Gold Nanoparticles May Restore People’s Sight (Detectors, 1 year ago)

- Revolutionizing Quantum Communication: Direct Connections Between Multiple Processors (Cancer, 1 year ago)

- Retiring Abroad Can Be Lonely Business (Earth & Climate, 1 year ago)

- Harnessing Water Waves: A Breakthrough in Controlling Floating Objects (Albert Einstein, 1 year ago)

- Household Electricity Three Times More Expensive Than Upcoming ‘Eco-Friendly’ Aviation E-Fuels, Study Reveals (Earth & Climate, 1 year ago)

-

Chemistry1 year ago

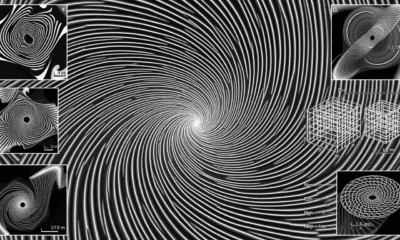

Chemistry1 year ago“Unveiling Hidden Patterns: A New Twist on Interference Phenomena”

- Reducing Falls Among Elderly Women with Polypharmacy through Exercise Intervention (Diseases and Conditions, 1 year ago)

- A Sustainable Solution: Researchers Create Hybrid Cheese with 25% Pea Protein (Agriculture and Food, 1 year ago)